Growth Hacking was an awful idea

By Mr. Market

Brand has historically been considered an imprecise, flailing effort, only necessary once you hit a certain size and even then only vaguely impactful.

But in the past six months, the studio I work at has seen an explosion in new interest, and our client portfolio now includes several companies people might assume are far too rational and PLG-focused to bother with brand. What's spurred these companies to suddenly make investments in wishy-washy concepts like trust, brand, and community?

IMO, it's that the growth hacking era backfired massively, and observant companies are now scrambling to undo years of bad-faith business tactics.

This post covers how we got here and how businesses can get out.

I. Growth dogma

First, where we went wrong.

Ideally, growth is the natural result of building something useful that people want and trust.

But in tech, 'growth' has come to mean extracting the maximum possible usage, attention, and revenue from as many users as possible, preferably very quickly. When this is your goal, aggressive distribution, below-cost pricing, and dark patterns seem like great ideas. And for a while, they were.

But the conditions that made growth hacking effective no longer exist.

Ten or fifteen years ago, markets were fragmented, users were unsophisticated, switching costs were high, and companies faced little public accountability. None of that is true today.

Growth maximalism has created a world where feature parity is the norm, switching costs are near zero across several categories, and founders are as visible as celebrities, with the public analyzing their every move at length.

II. How optimizing for time-to-value went wrong

One of the core ideas behind growth-driven software is that you should reduce friction wherever you can. Onboarding should be fast and the UI should fit like a glove. You want someone to settle into your tool as comfortably as they would an old easy chair.

Individually, this is great product design. Collectively, it has created full categories so samey there are almost no switching costs. The instinctive familiarity and ease of use that make onboarding fast also make it trivial to ditch a product for its competitor.

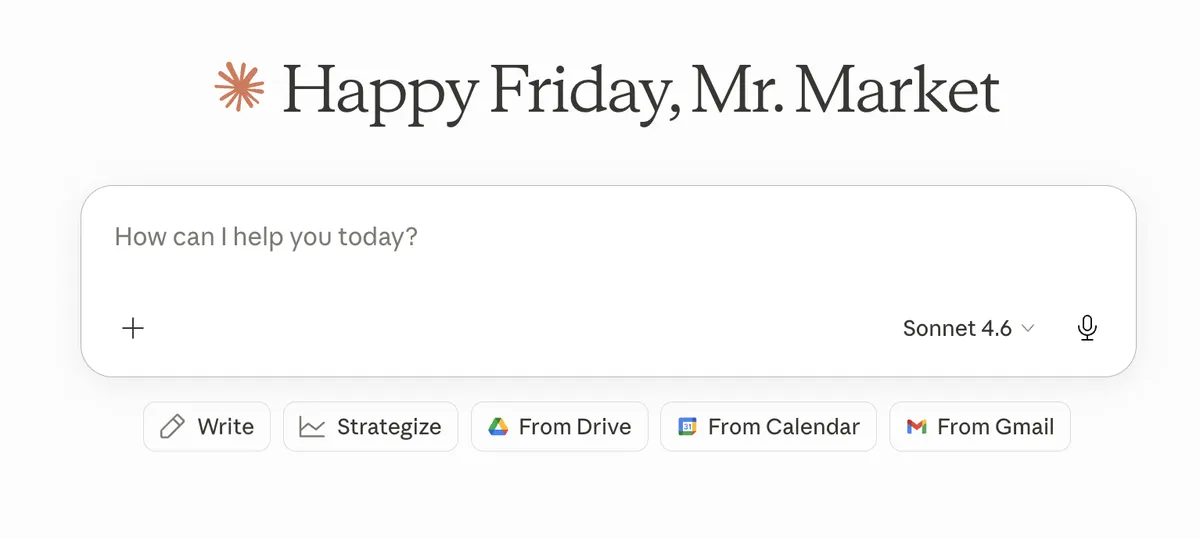

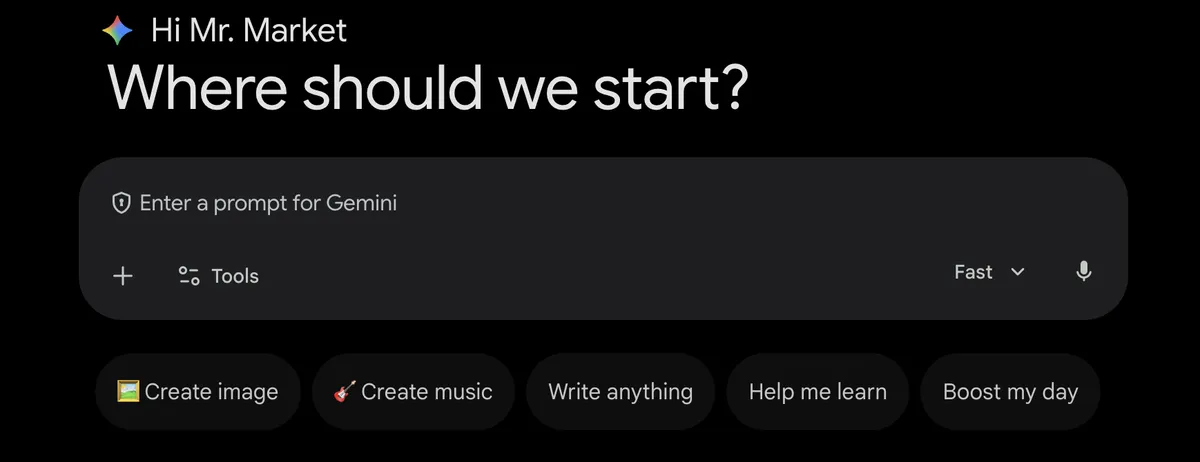

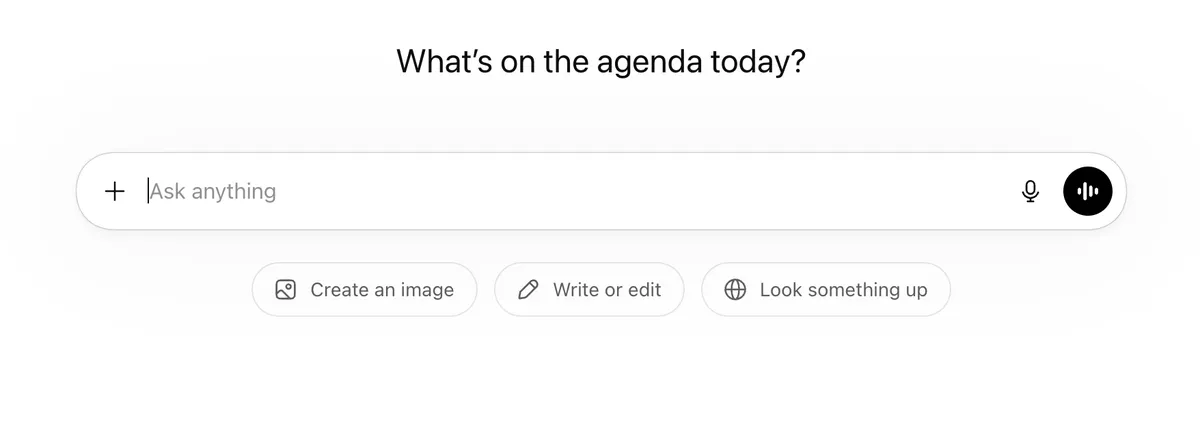

For proof of this, look no further than the OS of the moment:

The experience of using Claude is the same as using Gemini is the same as using ChatGPT (down to where the buttons are placed under the chatbox!). This isn't inherently bad design, but it has destroyed any concept of a moat or a distinctive product feel.

This parity is a big part of why the CEOs of frontier model companies are freaking out all the time. They know switching tools is as easy as switching tabs, so they have to keep saying crazy stuff in the news and trying to bait the market to maintain any kind of lead.

The same is true of Lovable vs. Bolt vs. Base44 vs. Replit. Everything about their product roadmaps has got to be nearly identical. Ask yourself: do you have a strong preference for any one of these tools? Do you know anyone who does?

III. The trust deficit

So we have feature parity and low switching costs. On their own, not ideal. But it's a disaster waiting to happen when you add in the trust deficit in tech.

The mass skepticism and hostility we're seeing from many end users now is the result of tech companies, over years, continually changing the terms of the deal. Users are sick of signing up for a product expecting one experience, then turning around a year later to find everything good has been ruined.

In a market with high switching costs, you can afford to erode trust incrementally because the user has no good exit. The lock-in absorbs the erosion. This is why the old school SaaS playbook worked so well. You'd just acquire customers aggressively and then monetize the trapped user.

But when you have basically no moat and switching is easy, it's a really, really bad idea to work this way.

The ongoing OpenAI/Anthropic face-off is a perfect example of the importance of trust in this market. Anthropic positioned themselves as the good guys from the beginning, but after OpenAI cut an unsettling deal with the Pentagon, Anthropic became the people's princess.

For a minute, it seemed like a foundation model company might finally secure long-term loyalty capable of weathering the arms race.

Then, Stella Laurenzo analyzed thousands of Claude Code sessions and logs and documented what she called a regression "to the point it cannot be trusted to perform complex engineering".

Anthropic first said nothing, then admitted to the problem only once it had been exposed. And there's still some debate as to whether this was the whole truth. What followed was a wave of public subscription cancellations and a trust hole that will take months to fill (if it fills).

In this case, enshittification was only part of the problem. The real betrayal was that Anthropic committed a cardinal sin for a good guy brand: it made a fool of its customers.

IV. Trust failures are now your competitor's best growth engine

Thanks to growth maximalism, the response to a trust violation is now easy and obvious: just cancel and go to a competitor.

When Anthropic's users canceled after the Claude Code incident, they didn't stop using AI coding assistants. They just switched to Cursor, Windsurf, and GitHub Copilot.

Every trust violation in a low-switching-cost market is a customer acquisition subsidy for whoever is slightly more trustworthy at the time. You are literally growing your competitor's user base!

This dynamic renders growth's classic defense incoherent. You can no longer afford to wait to fix things once you're big enough or have enough market share.

V. Paying back the trust debt

We lost the customer's trust through a category error.

The argument against infinite growth as a terminal value is not new, but tech ignores it. We behave as if occasionally sacrificing what's best for the customer is acceptable because there are always more new customers to win over. Plus, we can always win back customers we've lost.

What we didn't account for, though, is that we are not drawing down a pool of available users. We're drawing down a pool of trust.

Trust (not at the level of any single company, but the general background expectation that software companies will behave consistently and communicate honestly) is a commons. It's a shared resource built up slowly through thousands of interactions where companies told the truth when it was costly to do so.

Like any commons, it can be sustained, but only under specific conditions: transparency about the state of the resource, meaningful consequences for those who deplete it faster than it replenishes, and some form of collective input from those affected that is taken seriously.

Growth hacking pretty much violates all of these. It withdraws from the trust commons without disclosure, it faces no meaningful sanctions until it suddenly faces catastrophic ones, and it offers users no role in shaping the relationship.

Naturally, a correction is coming. We are treating customers as captives, not realizing that they can escape now.

People are going to start asking themselves a very hard new question: which company is most likely to protect my interests over time? They'll use whatever data we give them to make this prediction.

VI. How to fix it

So what do you actually do about all this? Here's what I'd do, from easiest to hardest.

Publish your changelog like a newspaper publishes corrections

When something degrades, say so before users figure it out.

This probably seems idealistic and impractical. But I really think this is the most important thing, especially after Lovable just got got by the entire vibecoding community on X.

Ex: In Anthropic's case, had Boris just come out and said they were trying to save compute by reducing thinking depth, people would have been annoyed, and maybe some would have switched to Codex for a week, but they would've come back after Opus 4.7 dropped.

Instead, the entire developer community discovered they couldn't trust their tooling and couldn't trust Anthropic to tell them about it.

Make your commitments costly to reverse

Low-cost commitments are often discounted, and for good reason.

Ex: saying 'we pledge to go paperless by 2050' is a low-cost commitment.

High-cost commitments, on the other hand, are correctly weighted as meaningful good faith efforts to prioritize customer satisfaction over other compelling incentives (like revenue). These pay off big time.

A few examples of high-cost commitments that have created loyalty and profitability that growth hacking can't buy:

- Hacker News refusing to run ads. If you've spent any time on HN, you've probably thought about how they could easily make a killing running ads. They don't, though, because they want to protect the integrity of their platform. This and their strict moderation practices are the reason HN is the premier news/comment forum in tech.

- Stardew Valley giving away DLC as free updates to the base game. ConcernedApe, Stardew's solo developer, has easily doubled the size of the base game in added content and free patches since Stardew's release 10 years ago. It's made him a true indie dev darling, and people trust him so much they await his projects with bated breath even years ahead of release.

- Stripe's refusal to resell transaction data. Stripe processes hundreds of billions in payment volume and has maintained an unrivaled reputation among developers in its category for years. Yet they still refuse to resell transaction data (which fintechs like Plaid publicly do).

Each of these high-cost commitments has been made and kept publicly.

Price for retention, not acquisition

The vast majority of your free tier will churn. The small percentage that converts has to pay for all the compute and labor your free tier consumes. Meanwhile, you're building no loyalty.

Realistic pricing selects for users who have considered the purchase for more than a minute, have a little more skin in the game, really want this to work for them, and are more likely to stick around. They're also more likely to survive the occasional bug or PR gaffe.

This runs directly counter to growth-funnel orthodoxy. It is very hard to defend in a board meeting. But the LTV of a user who paid to try something is almost certainly higher than the LTV of a user who signed up because they heard about it somewhere and it was free.
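If you need something to bring to that board meeting, here's a rough back-of-envelope sketch in Python. Everything in it is an assumption for illustration: the function names are mine, the numbers are made up, and the LTV formula is the simplest textbook version (monthly revenue divided by monthly churn). Plug in your own figures before drawing any conclusions.

```python
def free_tier_cost_per_convert(free_users, conversion_rate,
                               monthly_cost_per_free_user, months_on_free_tier):
    """Free-tier spend that each converted user has to cover.

    All inputs are hypothetical; nothing here comes from real company data.
    """
    paying_users = free_users * conversion_rate
    total_free_cost = free_users * monthly_cost_per_free_user * months_on_free_tier
    return total_free_cost / paying_users


def simple_ltv(monthly_revenue, monthly_churn):
    """Naive lifetime value: revenue per month times expected lifetime (1 / churn)."""
    return monthly_revenue / monthly_churn


# Made-up scenario: 10,000 free signups, 2% convert, each free user
# costs $1.50/month in compute and support and lingers for 3 months.
subsidy = free_tier_cost_per_convert(10_000, 0.02, 1.50, 3)

# Made-up churn rates: converts from a free tier churn faster than
# users who paid up front. This gap is the assumption doing the work.
ltv_free_convert = simple_ltv(20, 0.10) - subsidy
ltv_paid_upfront = simple_ltv(20, 0.05)

print(f"Free-tier subsidy per convert: ${subsidy:,.2f}")
print(f"Net LTV, free-tier convert:    ${ltv_free_convert:,.2f}")
print(f"LTV, paid-upfront user:        ${ltv_paid_upfront:,.2f}")
```

The point of the sketch is the shape of the math, not the outputs: the lower your conversion rate, the larger the subsidy each paying user carries, and the comparison only favors paid-first pricing if those users really do churn less.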

Aim for memory, not reach

Aggressive distribution has the same problem as the free tier: it pumps up your vanity metrics but delivers extremely low ROI over time.

Impressions/views have probably never been less meaningful. Our attention spans are shot and we're being pelted with content 24/7, so even if you do get in front of someone, you're competing against each of the hundreds of pieces of content they saw that day.

Instead, focus on less scalable, more memorable, more opinionated touchpoints. Basically, go narrow and deep rather than wide and shallow.

Think of yourself as a freshman in college. If you do what you think will make you popular, you'll just make the wrong friends. If you just try to be yourself, you'll find your people faster.

Good example of this: PostHog. Their marketing site is delightful to the right people and their GTM strategy is mostly about being funny/'real', which obviously appeals to devs.

Bad example of this: Artisan AI. They're the "Stop Hiring Humans" billboard people. Their CEO did an AMA that was disastrous, likely as another ragebait stunt. Their strategy is 'go viral at any cost'. I'm sure their conversion rate is abysmal.

VII. This could be the beginning of an enjoyable era in tech

Of course, there are effective ways to grow fast. Stripe grew a ton quite quickly and its trust balance grew with it. But we are probably close to running out of growth hacks that are both cheap and effective.

Every cheap trick is effective once, then shared on LinkedIn, replicated by other companies, and eventually treated with skepticism and distrust by any consumer who spots it being used.

Trust, on the other hand, is not susceptible to this pattern.

If someone finds out you're trying to be trustworthy, that is okay. It doesn't hurt. If other people see you keeping your commitments, they can't 'steal' them and win your userbase away from you.

They'll have to slog through their own long investment timeline, accumulate and nurture their own trust capital, and eventually they may be able to play the same game you're playing. But none of that threatens you.

I think watching companies play the trust game could make for a gratifying era in tech. My own work, at least, has felt net good lately.